Apache directory studio kerberos sasl principal

- #APACHE DIRECTORY STUDIO KERBEROS SASL PRINCIPAL HOW TO#

- #APACHE DIRECTORY STUDIO KERBEROS SASL PRINCIPAL SERIES#

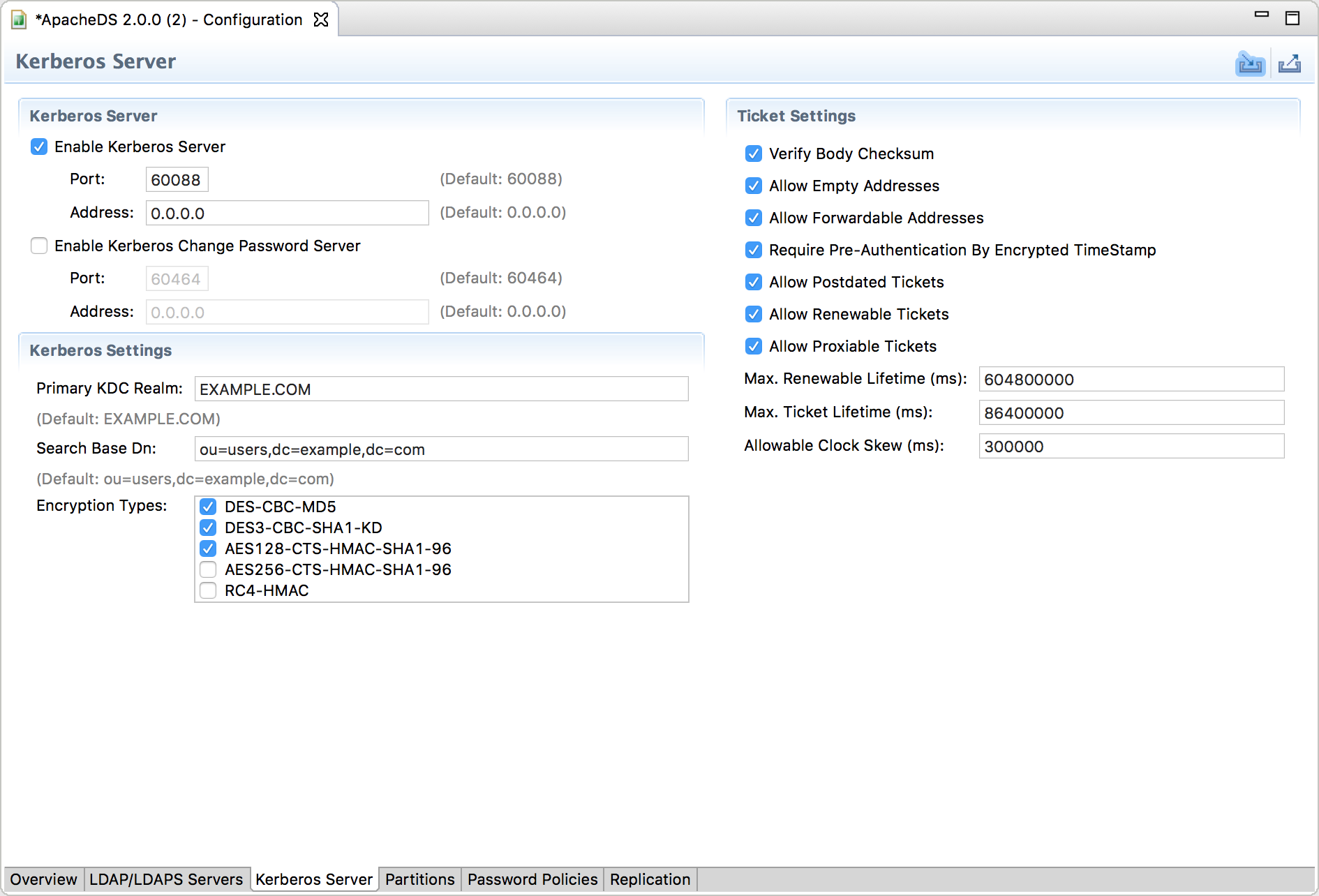

Now set the "KAFKA_OPTS" system property with the JVM arguments: Create a file called "config/client.jaas" with the content:Ĭom.Krb5LoginModule required refreshKrb5Config=true useKeyTab=true keyTab="/path.to.kerby.project/target/client.keytab" storeKey=true principal="client" Įdit *both* 'config/producer.properties' and 'config/consumer.properties' and add: To make the test-case simpler we added a single principal "client" in the KDC for both the producer and consumer. bin/kafka-topics.sh -create -zookeeper localhost:2181 -replication-factor 1 -partitions 1 -topic testĤ) Configure Apache Kafka producers/consumers.bin/kafka-server-start.sh config/server.properties.

Now we can start the server and create a topic as follows:

.config=/path.to.kafka/config/kafka.jaas.Again, we need to set the "KAFKA_OPTS" system property with the JVM arguments: For "SASL_SSL" please follow the keystore generation as outlined in the following article. We will just concentrate on using SASL for authentication, and hence we are using "SASL_PLAINTEXT" as the protocol. listeners=SASL_PLAINTEXT://localhost:9092.Now edit 'config/server.properties' and add the following properties: The "Client" section is used to talk to Zookeeper. bin/zookeeper-server-start.sh config/zookeeper.propertiesĬreate 'config/kafka.jaas' with the content:Ĭom.Krb5LoginModule required refreshKrb5Config=true useKeyTab=true keyTab="/path.to.kerby.project/target/kafka.keytab" storeKey=true principal="kafka/localhost".This can be done by setting the "KAFKA_OPTS" system property with the JVM arguments: Now create 'config/zookeeper.jaas' with the following content:Ĭom.Krb5LoginModule required refreshKrb5Config=true useKeyTab=true keyTab="/path.to.kerby.project/target/zookeeper.keytab" storeKey=true principal="zookeeper/localhost" īefore launching Zookeeper, we need to point to the JAAS configuration file above and also to the nf file generated in the Kerby test-case above. authProvider.1=.auth.SASLAuthenticationProvider.Edit 'config/zookeeper.properties' and add the following properties: Kafka and extract it (0.10.2.1 was used for the purposes of this Random port number in the target directory. The KDC each time, and it will create a "nf" file containing the Kerby is configured to use a random port to lauch It uses Apache Kerby to define the following principals: To run it just comment out the "" annotation on the

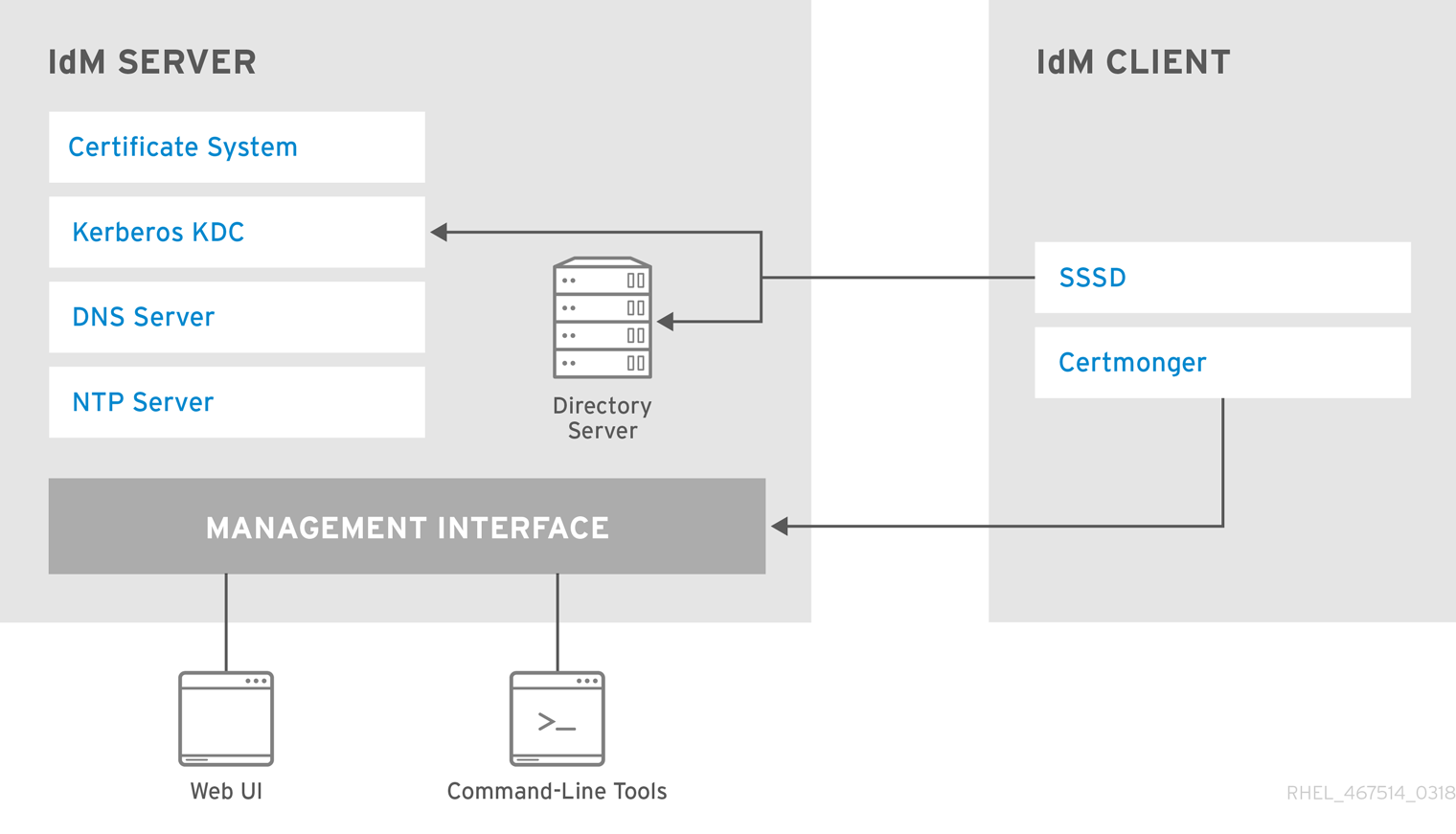

The KDC is a simple junit test that is available here. Various big data deployments, such as Apache Hadoop etc.

#APACHE DIRECTORY STUDIO KERBEROS SASL PRINCIPAL HOW TO#

In this post I will look at how to secure Apache Kafka using Kerberos, using a test-case based on Apache Kerby. Recently I wrote another article giving a practical demonstration how to secure HDFS using Kerberos. The articles covered how to secure access to the Apache Kafka broker using TLS client authentication, and how to implement authorization policies using Apache Ranger and Apache Sentry.

#APACHE DIRECTORY STUDIO KERBEROS SASL PRINCIPAL SERIES#

Last year, I wrote a series of blog articles based on securing Apache Kafka.
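As a rough illustration of the producer/consumer side of this setup, the script below writes a client JAAS file, a small properties fragment, and exports "KAFKA_OPTS". It is a minimal sketch under several assumptions that do not come from the text above: the "KafkaClient" section name (the default entry Kafka clients look up), the three SASL property values, the `-Djava.security.krb5.conf` argument, and the hypothetical `KERBY_TARGET` and `CONF_DIR` variables. The paths are placeholders, so adjust them to your own Kerby project and Kafka installation.

```shell
#!/bin/sh
# Sketch of a Kerberos client setup for Kafka producers/consumers.
# All locations are placeholders; nothing here contacts a KDC or broker.
set -eu

# Hypothetical variables: where Kerby wrote its keytabs and krb5.conf,
# and where the generated config files should go.
KERBY_TARGET="${KERBY_TARGET:-/path.to.kerby.project/target}"
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# JAAS file for the client. "KafkaClient" is assumed to be the section
# name, as it is the default login context for Kafka clients.
cat > "$CONF_DIR/client.jaas" <<EOF
KafkaClient {
    com.sun.security.auth.module.Krb5LoginModule required
    refreshKrb5Config=true
    useKeyTab=true
    keyTab="$KERBY_TARGET/client.keytab"
    storeKey=true
    principal="client";
};
EOF

# Fragment to append to both producer.properties and consumer.properties.
# Assumed values: the protocol matches a SASL_PLAINTEXT listener, GSSAPI is
# the Kerberos mechanism, and the service name is the first component of
# the broker principal "kafka/localhost".
cat > "$CONF_DIR/client-sasl.properties" <<'EOF'
security.protocol=SASL_PLAINTEXT
sasl.mechanism=GSSAPI
sasl.kerberos.service.name=kafka
EOF

# JVM arguments naming the JAAS file and the generated krb5.conf.
KAFKA_OPTS="-Djava.security.auth.login.config=$CONF_DIR/client.jaas -Djava.security.krb5.conf=$KERBY_TARGET/krb5.conf"
export KAFKA_OPTS

echo "KAFKA_OPTS=$KAFKA_OPTS"
```

After running it, append the contents of `client-sasl.properties` to both properties files; the exported "KAFKA_OPTS" is then picked up by the Kafka launch scripts in `bin/` when they are started from the same shell.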